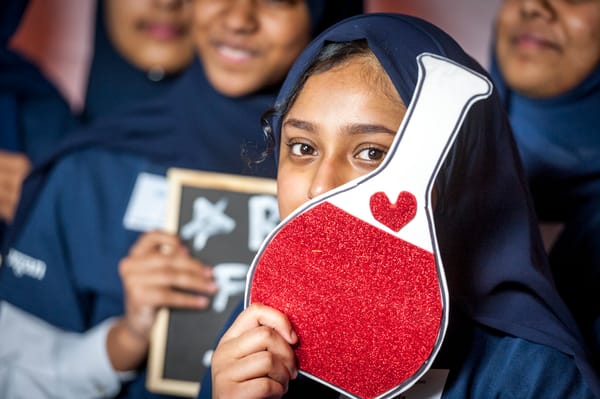

How machine learning could transform the pharmaceutical industry

A conversation with Professor Miraz Rahman, Head of the Department of Drug Discovery at King’s College London.

Machine learning (ML) models are particularly exciting for industries with high failure rates: only one in ten drug candidates that enter the (already exclusive) human trial stage makes it to market. With an increasing need for new treatments, drug discovery and development are areas where ML is undoubtedly promising. Felix spoke to Professor Miraz Rahman about whether – or when – we can expect ML to change the way medicines are discovered and delivered to the public.

Professor Rahman has extensive experience in applying advanced computational methods to understand drug resistance. His work on DNA-binding agents has produced significant outcomes, including patented anti-cancer molecules, contributions to a total of 24 filed patents in the field of drug discovery, and the establishment of two clinical-stage university spin-out companies.

He provided a grounded perspective on the hype, hurdles, and exciting breakthroughs of machine learning in the pharmaceutical industry.

The big picture: the pharma market is very complex

Since the 1950s, drug discovery has become progressively slower and more expensive: the number of drugs approved per billion US dollars halves every nine years, despite rapid technological advancements.
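As a rough illustration of that halving rate, assume a smooth exponential decline. This is a simplification not stated in the source, and the symbols $N(t)$ (drugs approved per billion US dollars $t$ years after a reference year) and $N_0$ (the value in the reference year) are introduced here purely for illustration:

$$
N(t) = N_0 \cdot 2^{-t/9}
$$

Under that assumption, output per billion dollars would fall eightfold over 27 years, since $2^{-27/9} = 2^{-3} = 1/8$.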

However, machine learning has the potential to be transformative at every stage: “it could eventually intervene in every step of drug discovery,” Professor Rahman explained.

One of the main money sinks in drug discovery is clinical trials, where the drug is tested for its safety and efficacy in progressively larger patient populations. While ML models are usually considered useful in the earlier stages of drug discovery, ML can also play a transformative role in clinical development by integrating preclinical data with patient population characteristics to optimise trial design, stratification, and endpoint selection. Streamlining clinical trials is where, according to Professor Rahman, significant untapped potential lies: “Even a one-year reduction in clinical trial duration means reducing the cost by tens to hundreds of millions.”

He highlighted the role of machine learning in clinical formulation, the process of developing the final treatment form administered to patients. Beyond identifying a promising drug candidate, it is equally important to determine its ideal dosage, absorbance form, and physiological effects on the body. As Professor Rahman explained, machine learning can integrate clinical and biopharmaceutical data to refine dose selection and patient response more efficiently than traditional empirical approaches. As this stage sits closer to regulatory approval and market entry, optimisation using machine learning carries substantial commercial value, reducing late-stage failure risk, accelerating timelines, and improving the likelihood of clinical and commercial success.

However, he observed that ML still has a very long way to go in this context, which isn’t always reflected in the media attention it receives: “Baby steps create a lot of noise – but they are still baby steps.”

Being wrong is underrated

When it comes to machine learning, input quality equals output quality. In drug development, this is reflected in the importance of negative data: that is, cases which do not show the quality the model is trying to identify.

Machine learning models can learn as much from experimental failure as they can from success. For example, training a model on a less biased set of data often means it can more accurately identify false positives. In drug discovery, this is particularly advantageous in classification tasks like ranking drug candidates by target affinity. Overall, the better the balance between positive and negative data, the better the model will perform when analysing or predicting real-world situations.
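A toy sketch can make the value of negative data concrete. It is not taken from Professor Rahman's work: the compound names, labels, and the deliberately useless `always_active` "model" are all hypothetical. It looks only at evaluation rather than training, and shows that a dataset containing only positives cannot expose false positives at all.

```python
# Toy illustration only: labels are 1 = active compound, 0 = inactive compound.
# All names and data here are hypothetical.

def always_active(_compound):
    """A useless 'model' that predicts every compound is active."""
    return 1

def accuracy(model, dataset):
    """Fraction of compounds whose predicted label matches the true label."""
    hits = sum(1 for compound, label in dataset if model(compound) == label)
    return hits / len(dataset)

def false_positive_rate(model, dataset):
    """Share of inactive compounds wrongly predicted active.

    Returns None when the dataset has no inactive compounds, because the
    rate cannot be measured without negative data.
    """
    negatives = [compound for compound, label in dataset if label == 0]
    if not negatives:
        return None
    false_positives = sum(1 for compound in negatives if model(compound) == 1)
    return false_positives / len(negatives)

# A "published-style" dataset containing only successes...
positives_only = [("cmpd_a", 1), ("cmpd_b", 1), ("cmpd_c", 1), ("cmpd_d", 1)]
# ...and the same set with an equal number of recorded failures added.
balanced = positives_only + [("cmpd_e", 0), ("cmpd_f", 0), ("cmpd_g", 0), ("cmpd_h", 0)]

print(accuracy(always_active, positives_only))             # 1.0
print(false_positive_rate(always_active, positives_only))  # None
print(accuracy(always_active, balanced))                   # 0.5
print(false_positive_rate(always_active, balanced))        # 1.0
```

On the positives-only set the useless model looks perfect and its false-positive rate is undefined; only once the failures are included does its weakness become visible.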

Professor Rahman agreed that the overwhelming publication bias towards positive data is a major difficulty for training ML models. He attributed this mainly to the reluctance of academics and journals to publish negative results: “Good headlines make better headlines.”

The power of integration

Despite this bias toward positive data, there is still so much data that it can be hard for ML models to process, even with specialised supercomputers. Professor Rahman considers integrating ML into existing processes to be the most exciting approach.

Directly connecting ML predictions to current pipelines makes the applications more specific, conclusive, and actionable. Professor Rahman explained that we should gradually stitch models into the drug development pipeline, from target identification to clinical trial optimisation. These more complex models can be applied to modelling specific disease responses to drugs, such as changing reactions to antibiotics, or the impact of multicomponent systems that might be observed in vivo, like nutrition or competition with commensal bacteria in the gut.

However, Professor Rahman emphasised that “even when we integrate models, we have to respect [the] uniqueness [of] each set of data.” Not all biomedical datasets are interchangeable: each has its own level of noise, bias, size, and biological significance. People training models must consider this variety of metadata to avoid flattening important distinctions and ensure predictions are biologically accurate at each stage.

When and how will ML change the industry?

Professor Rahman explained that machine learning is still at a very nascent stage, and wet laboratory work remains essential: it is the engine that generates the representative, high-quality data on which good models are based, and that validates computational insights. The more sophisticated our models become, the more we must rely on rigorous experimental design and careful data generation to ensure they are grounded in reality. So, while machines will not eliminate the need for laboratories any time soon, scientists must increasingly develop a skill set that focuses on understanding and critically using these models.

Professor Rahman highlighted that, in this context, we should not fear this change but embrace it and treat it with the respect it deserves. Teaching reasonable use of these technologies in research and their role in creating new knowledge is essential, he explained.

Despite rapid progress, he emphasised that unlocking the real potential of machine learning in drug discovery will require collaboration beyond the private sector. Training better models demands vast, high-quality datasets, shared infrastructure, and long-term investment – resources that are difficult for individual companies to build alone.

He argued that government-led initiatives have a crucial role to play in funding large collaborative research programmes, which would particularly advance the search for responsible and balanced data sharing across industry and academia. “We need better models, and I don’t see how the private sector can do that alone,” he said.